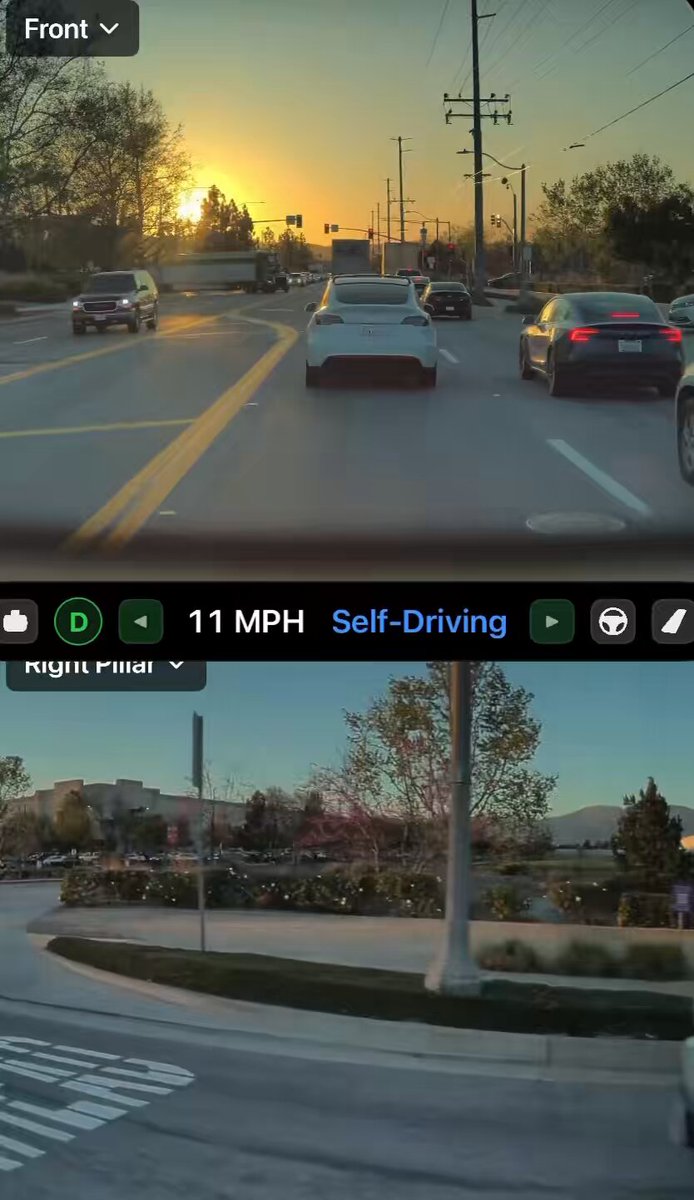

The News: A Tesla owner captured FSD voluntarily yielding to a vehicle exiting a parking lot while stopped at a red light — then proceeding once the maneuver was complete, with zero driver intervention.

Why It Matters: This kind of unscripted, context-aware social driving behavior is exactly what separates a genuinely capable autonomous system from one that just follows lane markings. It signals FSD is getting closer to how a thoughtful human driver actually behaves.

Source: @ray4tesla on X

FSD Does Something a Lot of Human Drivers Won't

Anyone who's tried to exit a busy parking lot during peak traffic knows the feeling — you inch forward, hoping someone will let you in, and most don't. Tesla's Full Self-Driving just showed it's more courteous than the average commuter.

Tesla owner and well-known FSD documenter Ray (@ray4tesla) captured the moment on March 7, 2026. His Tesla was stopped at a red light when FSD detected a vehicle attempting to exit a parking lot on the right. Rather than blocking the exit or ignoring the situation entirely, FSD held its position, allowed the other driver to complete the maneuver, and then pulled forward normally once the path was clear.

Ray described it as "very courteous and patient behavior" — and that framing matters. This wasn't FSD reacting to an emergency vehicle or following a mapped rule. It was the system reading an ambiguous, everyday social situation and making the considerate call.

Why This Behavior Is Harder Than It Looks

Yielding to a parking lot exit while stopped at a red light generally isn't required by traffic law, and there's no sign telling you to do it. It's a judgment call, one that requires the system to understand intent (that vehicle wants to exit), spatial context (there's room to hold back without disrupting traffic behind), and timing (the red light means forward movement isn't required right now anyway).

Earlier FSD versions would likely have treated the exiting vehicle purely as an obstacle to monitor, not a driver to accommodate. The difference here is the system acting with social awareness, not just collision avoidance.

This aligns with what Tesla has been building toward with FSD v14. FSD v14 reportedly runs on a neural network roughly 10x larger than its predecessors and uses an end-to-end architecture: raw camera inputs feed directly into driving commands, without a rigid rules layer in between. That architecture is what makes emergent behaviors like this one possible. The system isn't following a "yield to parking lot exits" rule; it's modeling the situation and choosing the most reasonable action.

Tesla also began introducing "reasoning" features with FSD v14.2, with further advancements expected in v14.3. This clip is consistent with that trajectory — the system isn't just reacting faster, it's thinking more contextually. For more on how FSD has evolved, see our FSD coverage.

This Isn't the First Time FSD Has Done This

This isn't an isolated incident. A similar behavior was documented on December 31, 2025, when FSD v14.2 yielded to a vehicle exiting a gas station under comparable conditions — stopped at a red light, right-side exit, no intervention needed. That earlier clip generated significant discussion in the FSD community at the time.

The fact that the same behavior surfaced again in Ray's March 2026 clip suggests this isn't a one-off fluke. It appears to be a repeatable behavior that FSD can now execute in the right conditions.

It's also worth noting that Tesla's official FSD v14 release notes from October 2025 referenced an "enhanced ability to pull over or yield" specifically for emergency vehicles. Yielding for a civilian parking lot exit goes beyond that documented scope — which makes this behavior all the more notable. It's the system generalizing a concept, not executing a hardcoded rule.

🔭 The BASENOR Take

Timeline: FSD v14.2 (active rollout as of March 2026 for HW4 vehicles in North America)

Impact Level: Medium — not a headline feature, but a meaningful signal of system maturity

Confidence: High — corroborated by multiple owner reports across different dates and scenarios

Analysis: The benchmark for autonomous driving has always been "can it handle what a good human driver handles?" Not just the rules — the unwritten ones too. Yielding to someone trying to exit a parking lot is one of those small acts of road courtesy that defines whether you're a considerate driver or just a vehicle following instructions. FSD passing that test, repeatedly and without prompting, is a more meaningful milestone than many of the headline features Tesla announces. It's the difference between a system that drives and one that actually understands driving.

📰 Deep Dive

What makes this clip worth paying attention to isn't the specific maneuver — it's what the maneuver reveals about how FSD is processing the world. The system had no obligation to yield. The red light gave it a natural pause, and holding that pause slightly longer to accommodate another driver required reading intent, not just detecting an object in a zone. That's a fundamentally different kind of computation.

FSD v14's end-to-end neural architecture is designed to produce exactly this kind of emergent, human-like behavior. By training on millions of miles of human driving data, including, presumably, countless examples of drivers courteously holding position for parking lot exits, the model learns the behavior as part of a broader pattern of "what good driving looks like" rather than as a discrete coded rule. The goal is a system that generalizes to new situations rather than failing when reality doesn't match a predefined scenario.

For HW3 owners, the wait continues — FSD v14 Lite is anticipated for Q2 2026. But clips like this one make a compelling case for why that upgrade matters. The gap between FSD v13 and v14 isn't just about speed or smoothness. It's about whether the system understands the social contract of driving. Based on what Ray captured this morning, v14 is getting there.

The broader implication for Tesla's autonomy roadmap is significant. Regulators and the public have long questioned whether autonomous systems can handle the ambiguous, unwritten rules of real-world driving. Every clip like this one — documented, timestamped, and repeatable — builds the evidentiary record that FSD is maturing beyond edge-case management into genuine situational judgment. That's the foundation everything else is built on.

Marcus covers Tesla's software releases, FSD rollouts, and OTA changes. Background in automotive engineering. Based in Austin.

Sources verified at publish time. Spotted an inaccuracy? Email editorial@basenor.com.