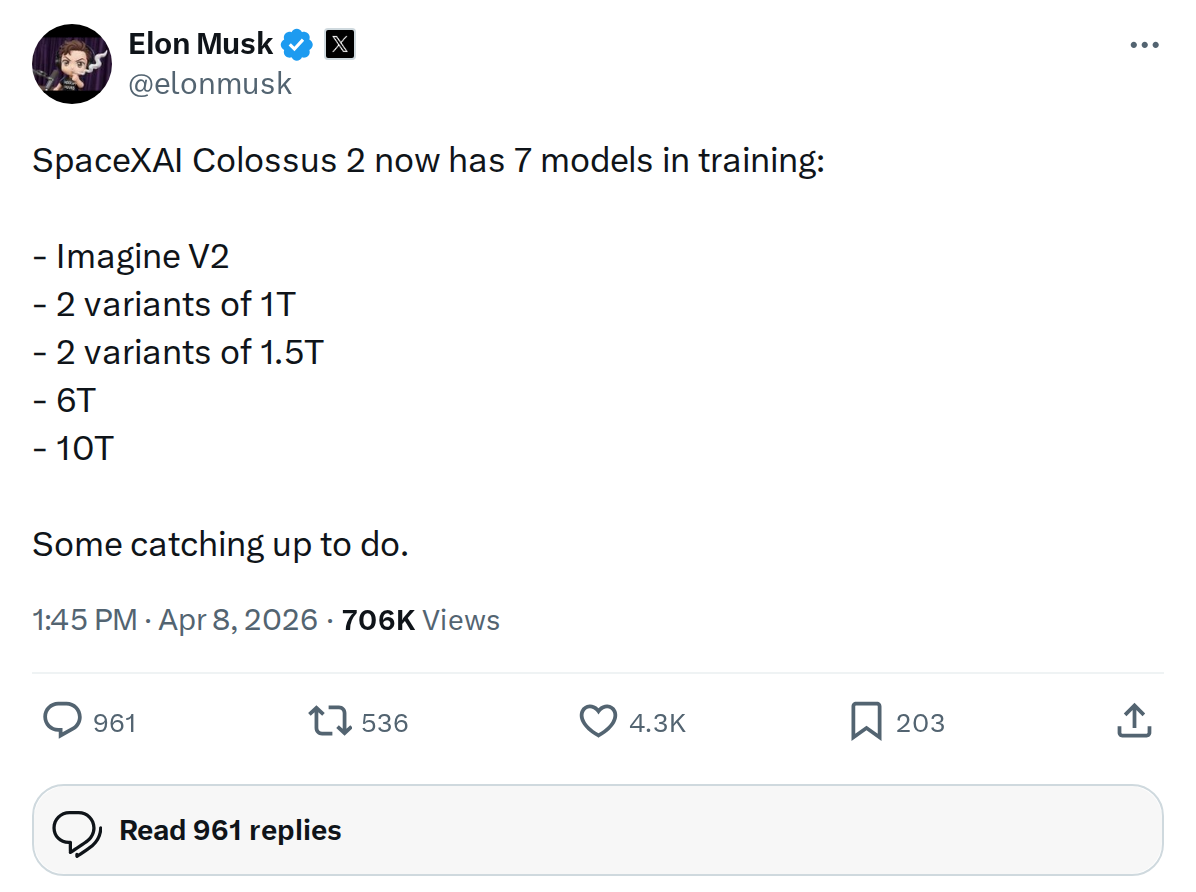

The News: Elon Musk confirmed that SpaceXAI Colossus 2 is actively running seven parallel AI model training jobs, including Imagine V2 and model variants scaling up to 10 trillion parameters.

Why It Matters: This is the clearest signal yet of how aggressively the post-acquisition SpaceX/xAI entity is scaling its AI pipeline — and the models in training today will power Grok, Tesla FSD, and Optimus tomorrow.

Source: @elonmusk on X

SpaceXAI Colossus 2 Is Training 7 AI Models Simultaneously — Including a 10T Giant

In a brief but revealing post on X, Elon Musk confirmed that SpaceXAI Colossus 2, the world's first gigawatt-scale AI training cluster, is currently running seven distinct model training jobs in parallel. The list ranges from a next-generation image model to what appears to be a 10-trillion-parameter language model. For Tesla owners, this matters: every major AI leap at xAI eventually flows downstream into FSD, the in-car Grok assistant, and Optimus.

📊 The 7 Models in Training

Musk's post names the following active training runs on Colossus 2:

| Model | Scale | Category |
|---|---|---|
| Imagine V2 | Not stated | Image generation (next-gen) |
| 1T Variant A | ~1 trillion params | Language model |
| 1T Variant B | ~1 trillion params | Language model |
| 1.5T Variant A | ~1.5 trillion params | Language model |
| 1.5T Variant B | ~1.5 trillion params | Language model |
| 6T | ~6 trillion params | Frontier language model |
| 10T | ~10 trillion params | Frontier language model |

The parameter counts here are striking. For context, GPT-4 is widely estimated at roughly 1.8 trillion parameters (OpenAI has not disclosed the figure). A 10-trillion-parameter model would be more than five times that size, a generational leap in scale, and Colossus 2 is training it alongside six other models at the same time.

🏗️ The Infrastructure Behind It

Colossus 2 became operational at gigawatt-scale power in January 2026, making it the world's first coherent AI training cluster to reach that threshold. According to published reports, the expanded Colossus complex houses approximately 550,000–555,000 NVIDIA Blackwell-series GPUs (primarily GB200 and GB300 chips), with a long-term goal of scaling toward 1 million GPUs.

The cluster currently draws roughly 1 GW of power, comparable to the peak electricity demand of a city the size of San Francisco, with plans to expand to 1.5 GW. The original Colossus in Memphis, Tennessee, was built in a record 122 days, and the xAI team has kept up that aggressive pace of infrastructure deployment as the complex has grown, including overflow capacity in Southaven, Mississippi.

SpaceX's acquisition of xAI in February 2026 merged these compute resources with SpaceX's operational infrastructure, creating what the company describes as a "vertically-integrated innovation engine." Colossus 2 is the physical backbone of that ambition.

🔭 The BASENOR Take

| Factor | Assessment |
|---|---|
| Timeline | Active now: training runs ongoing as of April 8, 2026 |
| Impact Level | 🔴 High: affects Grok, FSD, and Optimus roadmaps |
| Confidence | ✅ Confirmed: direct statement from Elon Musk |

The phrase Musk used in the post, "Some catching up to do," is the most telling part. It reads as an admission that SpaceXAI is benchmarking itself against external frontier labs and believes it has ground to cover. That competitive framing, combined with the sheer number of simultaneous training runs, suggests these aren't incremental updates to existing models. The 6T and 10T variants in particular point toward a Grok 5 or later model that would represent a step-change in capability.

The two-variant approach at both 1T and 1.5T scale is also notable. Running parallel experiments at the same parameter count is a classic technique for iterating quickly on model design: each pair could be testing different attention mechanisms, training data mixes, or fine-tuning approaches without committing the full compute budget to a single bet. This is how you move fast.

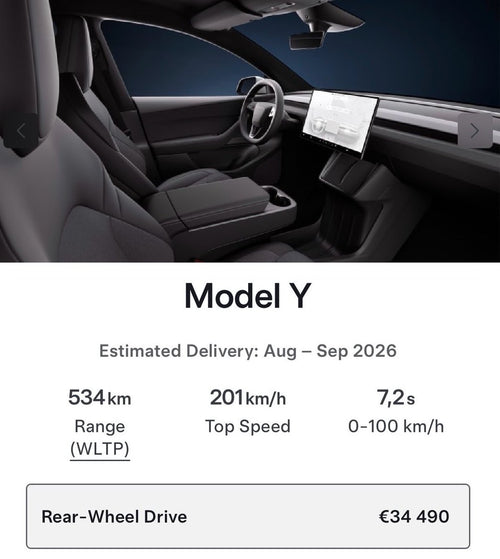

For Tesla owners specifically: the Grok assistant integrated into Tesla vehicles draws directly from this model pipeline. Imagine V2, the successor to xAI's image generation capability, could eventually power in-car visual AI features. And the frontier-scale models (6T, 10T) are the kind of foundation models that could inform next-generation FSD reasoning and Optimus dexterity. The cars being built today stand to receive these capabilities over-the-air as they mature out of Colossus 2. Follow our FSD and software update coverage to track when these models start shipping to your vehicle.