30-Second Brief

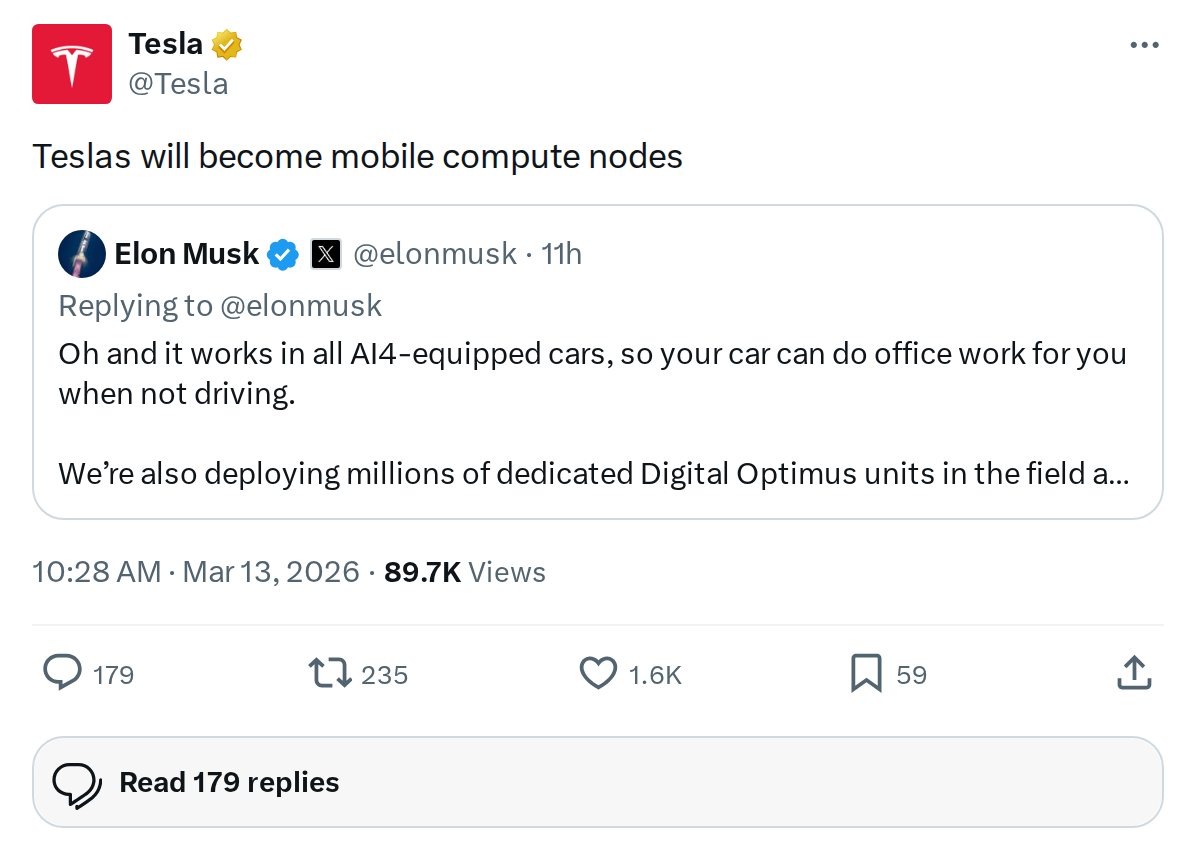

The News: Tesla officially announced that its vehicles will evolve into 'mobile compute nodes,' signaling a strategic push to turn the global Tesla fleet into a distributed AI computing network.

Why It Matters: Your Tesla isn't just a car — it's becoming a node in what could be the world's largest distributed AI supercomputer, with real implications for how onboard hardware is designed and what your vehicle may do while parked.

Source: @Tesla on X

Tesla Vehicles to Become Mobile Compute Nodes: What It Means for Every Owner

Tesla dropped a statement this morning that sounds deceptively simple but carries enormous implications: Teslas will become mobile compute nodes. One sentence, one massive strategic shift. Here's what's actually happening, and why it matters to you as a Tesla owner.

📊 Key Figures

| Metric | Value | Context |
|---|---|---|
| Projected fleet size | ~100 million vehicles | Musk's long-term estimate |
| Inference power per vehicle | ~1 kW | Per-vehicle contribution |
| Total network potential | ~100 GW | Combined AI inference power budget |
| Current in-car chip (HW4/AI4) | 100–150 TOPS (est.) | Samsung 7nm, shipping since Jan 2023 |
| Next-gen chip (AI5) | ~8x the compute of AI4 | Mass production expected 2027 |
| AI5 latency target | Sub-5 ms per inference | Under 50 W power draw |
| Samsung AI6 deal | $16.5 billion (reported) | Manufacturing partnership |
| Chip cadence target | New generation every ~9 months | AI5 → AI6 → AI7 roadmap |

What 'Mobile Compute Node' Actually Means

Strip away the jargon and the concept is straightforward: Tesla wants its vehicles — when parked and connected — to contribute their onboard processing power to a broader AI network. Think of it like a massive, car-based version of distributed computing, where idle compute cycles handle AI inference tasks rather than sitting dormant.

Elon Musk first outlined the scale of this ambition during Tesla's Q3 2025 earnings call. With an estimated 100 million vehicles each contributing roughly 1 kilowatt of inference capability, the combined network could theoretically deliver 100 gigawatts of distributed AI inference. Strictly speaking, gigawatts measure power draw rather than computation, so the figure describes the electrical budget the fleet could devote to AI work. Either way, it is a staggering amount of capacity, built not from data centers but from cars sitting in driveways and parking lots around the world.
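The headline number is simple multiplication: fleet size times per-vehicle inference power. The minimal Python sketch below reproduces the 100 GW figure and shows how linearly sensitive it is to both inputs. The function name and the alternative fleet sizes and wattages in the sweep are illustrative assumptions, not Tesla figures; the 50 W column mirrors the sub-50 W figure quoted for AI5.

```python
# Back-of-the-envelope model of the fleet-wide inference power figure.
# Inputs are the reported long-term estimates, not measured values.

def fleet_power_gw(vehicles: int, watts_per_vehicle: float) -> float:
    """Aggregate power budget in gigawatts (1 GW = 1e9 W)."""
    return vehicles * watts_per_vehicle / 1e9


FLEET = 100_000_000     # ~100 million vehicles (long-term estimate)
PER_VEHICLE_W = 1_000   # ~1 kW of inference per vehicle

print(f"{fleet_power_gw(FLEET, PER_VEHICLE_W):.0f} GW")  # → 100 GW

# Illustrative sensitivity sweep: the total scales linearly with both inputs.
for vehicles in (10_000_000, 50_000_000, 100_000_000):
    for watts in (50, 500, 1_000):
        print(f"{vehicles:>11,} vehicles x {watts:>5,} W = "
              f"{fleet_power_gw(vehicles, watts):6.1f} GW")
```

At 100 million vehicles, dropping the per-vehicle budget from 1 kW to 50 W shrinks the total from 100 GW to 5 GW, which is why the per-vehicle assumption matters as much as fleet size.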

The tasks this network would handle include AI inference workloads: pattern recognition, generative AI requests, and other compute-heavy operations that benefit from distributed processing. Crucially, the vehicles' existing power supply, thermal management systems, and connectivity infrastructure would be leveraged — meaning the hardware is already partially in place.
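Tesla hasn't said how vehicles would be selected for this work, but the conditions described above (parked, connected, with power and thermal headroom) suggest some kind of eligibility gate in front of a task dispatcher. The Python sketch below is purely hypothetical: `VehicleState`, `is_eligible`, `dispatch`, and both thresholds are invented for illustration, and round-robin is just the simplest possible assignment policy, not a known Tesla design.

```python
from dataclasses import dataclass

# HYPOTHETICAL: Tesla has not published how vehicles would be chosen for
# compute work. All fields and thresholds below are illustrative assumptions.

MIN_BATTERY_PCT = 50.0    # assumed state-of-charge floor when unplugged
MAX_MODULE_TEMP_C = 70.0  # assumed thermal limit for the compute module


@dataclass
class VehicleState:
    vin: str
    parked: bool
    connected: bool        # vehicle has network connectivity
    plugged_in: bool       # vehicle is on a charger
    battery_pct: float     # state of charge, 0-100
    module_temp_c: float   # stand-in for thermal headroom


def is_eligible(v: VehicleState) -> bool:
    """Parked, online, within the thermal limit, and either plugged in
    or above the battery floor."""
    if not (v.parked and v.connected):
        return False
    if v.module_temp_c > MAX_MODULE_TEMP_C:
        return False
    return v.plugged_in or v.battery_pct >= MIN_BATTERY_PCT


def dispatch(tasks: list[str], fleet: list[VehicleState]) -> dict[str, list[str]]:
    """Spread inference tasks round-robin across eligible vehicles."""
    nodes = [v.vin for v in fleet if is_eligible(v)]
    plan: dict[str, list[str]] = {vin: [] for vin in nodes}
    if not nodes:
        return plan  # no idle vehicles; nothing is dispatched
    for i, task in enumerate(tasks):
        plan[nodes[i % len(nodes)]].append(task)
    return plan


fleet = [
    VehicleState("A", True, True, True, 20.0, 35.0),    # plugged in: eligible
    VehicleState("B", True, True, False, 80.0, 40.0),   # high charge: eligible
    VehicleState("C", False, True, True, 90.0, 30.0),   # driving: skipped
    VehicleState("D", True, True, False, 30.0, 30.0),   # low charge: skipped
]
print(dispatch(["t1", "t2", "t3"], fleet))
# → {'A': ['t1', 't3'], 'B': ['t2']}
```

Gating on "plugged in or well charged" is one way to address the battery-draw concern; a real system would presumably also weigh owner settings, connectivity quality, and task latency requirements.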

The Hardware Roadmap Making This Possible

Tesla's current in-vehicle compute platform, Hardware 4 (HW4, also known as FSD Computer 2 and now referred to by Tesla as AI4), has been shipping since January 2023. Built by Samsung on a 7nm process with 20 ARM Cortex-A72 cores running at up to 2.35 GHz, it delivers an estimated 100–150 TOPS of raw AI performance. That's already serious compute for a consumer vehicle.

But the real enabler for the mobile compute node vision is AI5, Tesla's next-generation in-vehicle chip. As of January 2026, Musk described it as 'almost done.' The specs are significant: approximately 8x more raw compute than AI4, 9x more memory, and sub-5ms latency per inference cycle — all while staying under 50W of power consumption. High-volume production is targeted for 2027.
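The AI5 targets imply a few useful derived numbers. The Python sketch below assumes the "approximately 8x" multiplier applies to HW4's estimated 100–150 TOPS, and treats 5 ms and 50 W as upper bounds; the results are rough bounds under those assumptions, not published specifications.

```python
# Rough arithmetic on the reported AI5 targets. Inputs are the quoted
# figures; outputs are derived bounds, not official specs.

HW4_TOPS = (100, 150)   # estimated HW4/AI4 range
AI5_MULTIPLIER = 8      # assumed reading of "approximately 8x more raw compute"
LATENCY_S = 0.005       # 5 ms upper bound per inference
POWER_W = 50            # 50 W upper bound on power draw

# Implied AI5 compute if the multiplier applies to the HW4 estimate.
ai5_tops = tuple(t * AI5_MULTIPLIER for t in HW4_TOPS)
print(f"Implied AI5 compute: {ai5_tops[0]}-{ai5_tops[1]} TOPS")  # 800-1200

# At <= 5 ms each, one sequential stream completes >= 200 inferences/s.
min_rate = 1 / LATENCY_S
print(f"Sequential throughput: >= {min_rate:.0f} inferences/s")

# Attributing the full chip power to one inference: 50 W x 5 ms = 0.25 J max.
max_energy_j = POWER_W * LATENCY_S
print(f"Energy per inference: <= {max_energy_j:.2f} J")
```

The energy bound is conservative: if the chip runs several inferences concurrently, the energy attributable to each one is lower still.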

Beyond AI5, Tesla is already in early development on AI6, with Samsung Electronics locked in as the manufacturing partner under a reported $16.5 billion agreement. Tesla's stated goal is a new chip generation approximately every nine months — a pace that, if sustained, would make the in-vehicle compute stack one of the fastest-evolving in the industry.
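To see what "if sustained" would mean in practice, the Python sketch below projects the nine-month cadence forward. Tesla has only given "2027" for AI5 volume production, so the January 2027 start date is an assumption, as is the helper `add_months`; shift the start and every later date moves with it.

```python
from datetime import date

# Hypothetical projection of the "new generation every ~9 months" goal.
# AI5 is only targeted for "2027"; the January 2027 start is an assumption.

def add_months(d: date, months: int) -> date:
    """Shift a date by whole months, returning the 1st of the target month."""
    total = d.year * 12 + (d.month - 1) + months
    return date(total // 12, total % 12 + 1, 1)


CADENCE_MONTHS = 9
start = date(2027, 1, 1)  # assumed AI5 volume-production start

for n, chip in enumerate(["AI5", "AI6", "AI7"]):
    when = add_months(start, n * CADENCE_MONTHS)
    print(f"{chip}: ~{when:%b %Y}")
```

Under this assumption, AI6 would land around October 2027 and AI7 around July 2028, roughly in line with the 2028-and-beyond window for full fleet node capability.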

Sitting alongside this vehicle-side roadmap is the Dojo3 supercomputer project. Restarted in January 2026 after a 2025 pause, Dojo3 is designed to be Tesla's first supercomputer powered entirely by its own silicon — starting with AI5 and incorporating AI6 and AI7 as they arrive. The goal is to reduce dependence on external AI hardware suppliers for large-scale training workloads.

Digital Optimus: The Broader AI Picture

This announcement doesn't exist in a vacuum. On March 11, 2026 — just two days ago — Elon Musk unveiled Digital Optimus (also referred to internally as 'Macrohard'), a joint xAI-Tesla AI project that emerged from Tesla's $2 billion investment agreement with xAI. Digital Optimus is designed to run on Tesla's AI infrastructure. The mobile compute node vision, Dojo3, and Digital Optimus appear to be converging pieces of the same larger strategy: Tesla building a vertically integrated AI ecosystem where vehicles, data centers, and software all reinforce each other.

🔭 The BASENOR Take

Timeline: Vision stated now → AI5 mass production 2027 → Full fleet node capability: 2028 and beyond

Impact Level: 🔴 High — This reframes what a Tesla fundamentally is

Confidence: Medium-High — Hardware roadmap is confirmed; fleet-scale deployment details remain to be seen

Analysis: Tesla's one-sentence announcement is the clearest signal yet that the company sees its vehicles as computing assets, not just transportation products. The hardware trajectory (HW4 today, AI5 in 2027, AI6 to follow) is being positioned to support this. For current owners, the near-term impact is limited: HW4 vehicles aren't being retrofitted into network nodes tomorrow. But for anyone buying a Tesla in 2027 or beyond, the vehicle will likely ship with AI5 and be designed from the ground up to participate in this distributed network.

The open questions are significant. What compensation model, if any, exists for owners whose vehicles contribute compute? What are the privacy and data implications? How does battery draw factor in during idle compute sessions? Tesla hasn't answered these yet, but the direction of travel is now officially on record.
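The battery-draw question can at least be sized with simple arithmetic. The Python sketch below compares an overnight session at the ~1 kW per-vehicle figure with one at AI5's 50 W target. The 75 kWh pack and the 8-hour session are hypothetical round numbers, and the model ignores the energy needed to keep the rest of the vehicle awake, cooling, and conversion losses, so real draw would be higher.

```python
# Illustrative battery-draw arithmetic for an idle compute session.
# PACK_KWH and SESSION_H are hypothetical; the power levels are the
# ~1 kW per-vehicle figure and AI5's sub-50 W target.

PACK_KWH = 75.0   # hypothetical usable pack capacity
SESSION_H = 8.0   # hypothetical overnight session length


def session_draw(power_w: float, hours: float = SESSION_H) -> tuple[float, float]:
    """Return (energy in kWh, share of pack in percent) for one session."""
    kwh = power_w * hours / 1000
    return kwh, 100 * kwh / PACK_KWH


for label, watts in (("~1 kW per vehicle", 1_000), ("AI5 at 50 W", 50)):
    kwh, pct = session_draw(watts)
    print(f"{label}: {kwh:.1f} kWh, {pct:.1f}% of pack")
```

Under these assumptions the gap is twentyfold: about 8 kWh (roughly 10.7% of the pack) at 1 kW versus 0.4 kWh (about 0.5%) at 50 W.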

📰 Deep Dive

What makes this announcement strategically interesting is the compounding effect of Tesla's vertical integration. Most companies that want distributed computing power have to build data centers or pay cloud providers. Tesla is proposing to build that capacity into a product people are already buying for an entirely different reason — personal transportation. The economics of that, if executed, are unlike anything in the industry.

The 100 GW figure Musk cited at Q3 2025 earnings deserves scrutiny. It assumes 100 million vehicles, each contributing 1 kW of inference, a scale Tesla's fleet hasn't reached yet. But the directional logic is sound: as the fleet grows and per-vehicle compute increases with each chip generation, the aggregate network effect becomes genuinely formidable. AI5's sub-50W power consumption is a key detail here, because it suggests Tesla has engineered the chip with idle-state efficiency in mind, not just peak driving performance. It also sits uneasily beside the 1 kW assumption: a chip drawing under 50W is at most one-twentieth of that per-vehicle budget, and Tesla hasn't explained how the two figures reconcile.

For owners with HW4 vehicles today, the most relevant takeaway is that Tesla's hardware investment decisions are now explicitly tied to this compute node ambition. That means future OTA updates may begin unlocking idle compute features incrementally, well before AI5 vehicles hit the road at scale. Watch for changes in how Tesla manages vehicle connectivity and background processing in upcoming software releases; those could be the first visible signs of this strategy in action. For more on how Tesla's software updates are evolving, see our software updates coverage.

The broader implication is a fundamental redefinition of vehicle ownership. If your car becomes a productive asset that contributes to an AI network while parked, the relationship between owner, manufacturer, and the vehicle itself changes. Tesla has planted the flag. The details of how that relationship is structured — and whether owners benefit directly — will define whether this vision is as compelling in practice as it is on paper.