The News: Tesla FSD (Supervised) v14 was captured on video detecting an approaching truck that was nearly invisible to the human eye, showcasing the system's 360° camera-based perception.

Why It Matters: This is a real-world demonstration of how FSD v14's upgraded neural vision goes beyond human sight — spotting threats before drivers consciously register them.

Source: @TeslaNewswire on X

Tesla FSD v14 Spots a Nearly Invisible Truck — 360° Vision in Action

A short video circulating this morning shows Tesla FSD (Supervised) v14 doing something that quietly reframes the entire safety argument for AI-assisted driving: it detected an oncoming truck that the human driver almost certainly would not have registered in time. The system flagged it, reacted, and moved on — all without drama. That's exactly how it's supposed to work.

📊 Key Figures

| Metric | Detail |

|---|---|

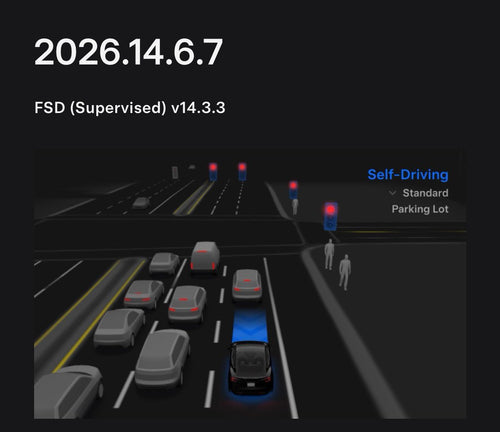

| FSD Version | v14 (latest: v14.2.2.5 in North America as of Feb 14, 2026) |

| Hardware Required | Hardware 4 (HW4 / AI4) — HW3 not supported |

| Camera Coverage | 360° exterior camera array |

| FSD Monthly Price | $99/month (outright purchase no longer available as of Feb 14, 2026) |

| Neural Vision Upgrade | Higher-resolution encoders added in v14.2.1 for improved obstacle detection |

What FSD v14's 360° Vision Actually Means

Tesla's camera-only approach has always been the philosophical bet at the center of FSD. No lidar. No radar as a primary input. Just cameras, compute, and neural networks trained on billions of real-world miles. For years, critics argued that cameras alone couldn't match the reliability of sensor fusion. Clips like this one are Tesla's answer.

The truck in this video wasn't hiding; it was simply in a position where human visual attention would likely have missed or underweighted it. Low contrast, an awkward angle, and partial occlusion are exactly the conditions where human perception degrades. FSD v14's upgraded neural vision encoders, introduced in v14.2.1, were designed to improve obstacle detection in these edge cases. The system doesn't get tired, doesn't get distracted, and processes all eight camera feeds simultaneously, every fraction of a second.

This matters beyond the wow factor. The practical safety implication is that FSD v14 is building a perception buffer around the driver — catching what human eyes miss before it becomes a crisis. That's the core promise of supervised autonomy, and moments like this are where it becomes tangible rather than theoretical.

What's Under the Hood in v14

To understand why v14 can pull off detections like this, it helps to know what changed architecturally. According to Tesla's release notes, FSD v14.2.1 introduced upgraded neural network vision encoders with higher-resolution feature maps, a direct improvement to how the system interprets raw camera data before it reaches the planning and decision layers. Emergency vehicles, unexpected obstacles, and partially obscured objects were called out specifically as beneficiaries of this upgrade.
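To make the feature-map point concrete, here is a minimal Python sketch. It is a toy illustration, not Tesla's code: the function names (`make_scene`, `average_pool`, `peak_response`), the 32x32 grid, and the use of plain average pooling as a stand-in for a vision encoder are all assumptions chosen for this example. It shows only the general principle that a small object leaves a weaker trace in a coarser feature map.

```python
def make_scene(size=32, obj_top=8, obj_left=8, obj_size=2, contrast=1.0):
    """Build a blank square grid with one small bright object (hypothetical scene)."""
    image = [[0.0] * size for _ in range(size)]
    for r in range(obj_top, obj_top + obj_size):
        for c in range(obj_left, obj_left + obj_size):
            image[r][c] = contrast
    return image


def average_pool(image, stride):
    """Downsample by averaging non-overlapping stride x stride blocks.

    Assumes the grid is square and its size is divisible by stride.
    """
    size = len(image)
    pooled = []
    for r in range(0, size, stride):
        row = []
        for c in range(0, size, stride):
            block = [image[r + dr][c + dc]
                     for dr in range(stride)
                     for dc in range(stride)]
            row.append(sum(block) / len(block))
        pooled.append(row)
    return pooled


def peak_response(feature_map):
    """Return the strongest value anywhere in the feature map."""
    return max(max(row) for row in feature_map)


scene = make_scene()
fine = average_pool(scene, stride=2)    # 16x16 map: higher resolution
coarse = average_pool(scene, stride=8)  # 4x4 map: lower resolution

print(peak_response(fine))    # 1.0
print(peak_response(coarse))  # 0.0625
```

In this toy setup, the 2x2 object keeps its full response of 1.0 in the finer map but is diluted to 0.0625 in the coarser one. That is the intuition behind why higher-resolution features can help with small, distant, or low-contrast obstacles; a real encoder is far more complex than this.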

Beyond raw perception, v14 also integrates routing and navigation directly into the neural network, allowing for real-time replanning rather than relying on a separate map-matching layer. The result is a system that doesn't just see better — it reacts more fluidly to what it sees. Community testers have consistently described v14 as feeling qualitatively different from earlier versions: smoother lane changes, more confident gap acceptance, and fewer of the hesitation moments that made earlier FSD feel mechanical.

One important hardware note: FSD v14 runs exclusively on Hardware 4 (HW4). If your Tesla shipped with HW3, it won't receive FSD (Supervised) v14 updates. This is a meaningful split in the fleet and worth knowing if you're evaluating whether your current vehicle can access these perception improvements. For our full breakdown of FSD coverage and what each version brings, check the linked archive.

🔭 The BASENOR Take

Timeline: FSD v14 has been rolling out since late 2025, with v14.2.2.5 reaching North American vehicles around February 14, 2026. This clip represents real-world v14 behavior, not a staged demo.

Impact Level: 🟠 High — not a headline feature announcement, but a ground-level proof point that the perception architecture is working as intended in unpredictable conditions.

Confidence: 🟢 High — the video is the evidence. The background context on v14's neural vision upgrades is verified from Tesla's own release notes.

Analysis: Single clips don't prove a system is ready for full autonomy. But they do reveal capability floors — what the system can do at minimum in a given scenario. A truck detection that a human would have missed isn't a party trick; it's a signal that the perception model is operating above the baseline human driver in at least some conditions. As Tesla accumulates more of these moments across its fleet, the statistical case for supervised autonomy gets harder to dismiss. The camera-only bet is looking more defensible with each v14 update.

📰 Deep Dive

The broader context here is Tesla's multi-year commitment to a vision-only approach to autonomous perception. While competitors have leaned on lidar for its precise depth mapping, Tesla's argument has always been that the world is built for human eyes — cameras trained well enough should be able to navigate it. FSD v14 represents the most mature expression of that thesis to date, and detections like the one in this clip are the empirical evidence that the approach isn't just philosophically coherent — it's functionally effective.

What's worth watching in the coming months is how frequently the community documents these perception edge cases. FSD v14 is still supervised, meaning a driver must remain attentive and ready to intervene. But the gap between what the system catches and what a human would catch in the same moment appears to be widening in FSD's favor for specific threat categories: low-visibility obstacles, unusual approach angles, and fast-moving vehicles entering the cameras' peripheral zones. That's meaningful progress.

For HW4 owners currently on FSD v14, the practical takeaway is straightforward: the system's perception layer is doing real work even when the road looks clear to you. Staying engaged as the supervisor doesn't mean second-guessing every detection — it means being ready to act if the system's response ever needs human override. The truck clip is a reminder that the partnership between driver and AI is still active, and right now, it's working.

Sarah focuses on Tesla Energy, SpaceX missions, and the broader Musk AI portfolio. Former data analyst in clean energy. Based in San Francisco.

Sources verified at publish time. Spotted an inaccuracy? Email editorial@basenor.com.