30-Second Brief

The News: Electrek editor-in-chief Fred Lambert is calling out an official Tesla North America tweet that promoted a testimonial from a Cybertruck owner, a vision-impaired individual whose ophthalmologist reportedly recommended a Tesla with FSD. Lambert warns the post could become evidence in future FSD crash litigation.

Why It Matters: FSD remains a Level 2 driver-assist system that legally requires full driver attention and responsibility. Marketing that implies otherwise creates serious liability exposure — for Tesla and potentially for owners who misunderstand what FSD can and cannot do.

Sources: @FredLambert (1) · @FredLambert (2)

Tesla FSD Marketing Under Fire: Vision-Impaired Driver Testimonial Sparks Legal Warning

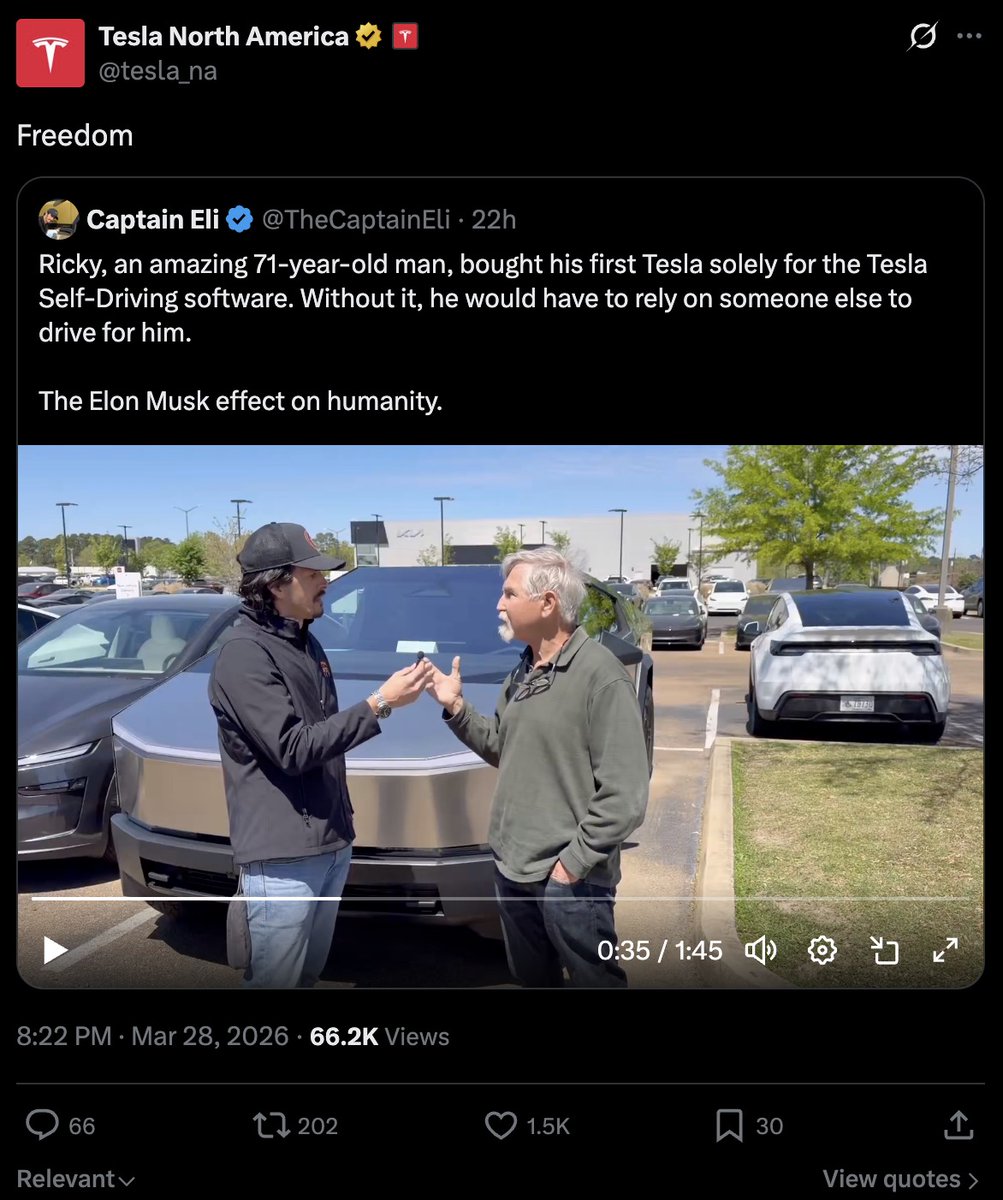

A single tweet from Tesla's official North America account has ignited a fierce debate about the boundaries of FSD marketing — and one of the EV industry's most prominent journalists is warning it could end up in a courtroom. The controversy centers on a video testimonial from a new Cybertruck owner who says his ophthalmologist recommended a Tesla with Full Self-Driving because of his deteriorating eyesight. Tesla's official account amplified it. Fred Lambert, editor-in-chief of Electrek, says that was a serious mistake.

What Tesla Actually Promoted

According to Lambert's reporting, the original Tesla North America tweet featured a Cybertruck owner explaining that his ophthalmologist — an eye doctor — had recommended he purchase a Tesla equipped with FSD specifically because of his worsening vision. The implication embedded in that testimonial is clear: FSD can compensate for a driver's impaired ability to see the road.

That framing is the problem. FSD, as Tesla's own documentation states, is an SAE Level 2 driver-assistance system. It is not autonomous. It does not eliminate the need for a fully attentive, legally capable driver behind the wheel. The driver remains responsible for the vehicle at all times, including monitoring the road, overriding the system when necessary, and maintaining the physical and legal capacity to drive.

Promoting a testimonial that frames FSD as a solution for someone with deteriorating eyesight cuts directly against that classification.

Lambert's Core Warning: This Is Future Courtroom Material

Lambert didn't mince words. His initial reaction — "Absolute Madness!!!!" — was followed by a pointed legal prediction: a plaintiff's attorney will one day hold up this tweet and tell a jury that Tesla's own official account promoted a testimonial from a vision-impaired driver as an endorsement of FSD's capabilities.

In his follow-up, Lambert was careful to separate the technology's long-term promise from its current reality: "I think autonomous driving has incredible potential to give people with disabilities more freedom, including vision-impaired people, but FSD is not there yet. Tesla itself says FSD is a level 2 driver-assist system and the driver is always responsible."

That distinction is critical. The vision of fully autonomous vehicles enabling independence for people with disabilities is genuinely compelling — and widely shared across the industry. But marketing a Level 2 system as though it delivers that vision today is a different matter entirely.

📊 Key Figures

| Metric | Value | Context |
|---|---|---|
| FSD Autonomy Level | Level 2 | Per Tesla's own classification |
| Driver Responsibility | 100% | At all times, per Tesla's terms |
| Tweet Engagement | 1,032 views | Lambert's warning post, within hours |

🔭 The BASENOR Take

Lambert's concern is not hypothetical. Tesla has faced — and continues to face — litigation related to FSD and Autopilot incidents. In those cases, plaintiff attorneys routinely comb through Tesla's marketing materials, social media posts, and public statements to establish a pattern of overclaiming. An official Tesla account amplifying a testimonial that frames FSD as appropriate for a driver with deteriorating eyesight is precisely the kind of exhibit that can shift a jury's perception of corporate intent.

The tension here is real and it isn't going away. Tesla is simultaneously trying to sell FSD subscriptions and build public enthusiasm for a future of full autonomy, while legally obligated to classify the current product as Level 2. Those two goals pull in opposite directions when it comes to marketing. Every testimonial that leans too far toward the autonomous future — without clearly anchoring it in the Level 2 present — adds to a growing body of material that opposing counsel can use.

For owners who use FSD, the takeaway is straightforward: the system is a powerful tool, but it demands your full attention every single time you engage it. No ophthalmologist's recommendation changes that legal and practical reality. If you are not in a condition to monitor and override the system at any moment, you should not be using it — regardless of what any marketing material implies.

Whether Tesla removes or qualifies the original tweet remains to be seen. But Lambert's warning stands on its own merits: in the current legal environment surrounding autonomous driving, official marketing that blurs the line between Level 2 assistance and genuine autonomy is not just bad optics. It is a liability waiting to be activated.

Marcus covers Tesla's software releases, FSD rollouts, and OTA changes. Background in automotive engineering. Based in Austin.

Sources verified at publish time. Spotted an inaccuracy? Email editorial@basenor.com.